Despite having access to ever more efficient and powerful hardware, operations that are run directly on traditional physical (or bare-metal) servers unavoidably face significant practical limits. The cost and complexity of building and launching a single physical server mean that adding or removing resources to quickly meet changing demand is difficult or, in some cases, impossible. Safely testing new configurations or full applications before their release can also be complicated, expensive, and time-consuming.

As envisioned by pioneering researchers Gerald J. Popek and Robert P. Goldberg in a paper from 1974 (“Formal Requirements for Virtualizable Third Generation Architectures”, Communications of the ACM 17 (7): 412–421), successful virtualization must provide an environment that:

- Is equivalent to that of a physical machine, so that software access to hardware resources and drivers is indistinguishable from a non-virtualized experience.
- Allows complete client control over virtualized system hardware.
- Wherever possible, efficiently executes operations directly on underlying hardware resources, including CPUs.

Virtualization allows physical compute, memory, network, and storage (“core four”) resources to be divided between multiple virtual entities. Each virtual device is represented within its software and user environments as an actual, standalone entity. Configured properly, virtually isolated resources can provide more secure applications with no visible connectivity between environments.

Virtualization also allows new virtual machines to be provisioned and run almost instantly, and then destroyed as soon as they are no longer needed. For large applications supporting constantly changing business needs, the ability to quickly scale up and down can spell the difference between survival and failure. The kind of adaptability that virtualization offers allows scripts to add or remove virtual machines in seconds, rather than the weeks it might take to purchase, provision, and deploy a physical server.

Under non-virtual conditions, x86 architectures strictly control which processes can operate within each of four carefully defined privilege layers (described as Ring 0 through Ring 3). Normally, only the host operating system kernel can execute the instructions reserved for Ring 0. However, since you can’t give multiple virtual machines running on a single physical computer equal access to Ring 0 without asking for big trouble, there must be a virtual machine manager (or “hypervisor”) whose job it is to redirect requests for resources like memory and storage to their virtualized equivalents.

When working within a hardware environment without hardware virtualization extensions (AMD’s SVM or Intel’s VT-x), this is done through techniques known as trap-and-emulate and binary translation. On virtualized hardware, such requests can usually be caught by the hypervisor, adapted to the virtual environment, and passed back to the virtual machine.

Simply adding a new software layer to provide this level of coordination will add significant latency to just about every aspect of system performance. One very successful solution has been to introduce new instruction sets into CPUs that create a so-called “Ring -1” that will act as Ring 0 and allow a guest OS to operate without having any impact on other, unrelated operations. In fact, when implemented well, virtualization allows most software code to run exactly the way it normally would without any need for trapping.

Though it often plays a support role in virtualization deployments, emulation works quite differently. While virtualization seeks to divide existing hardware resources among multiple users, the goal of emulation is to make one particular hardware/software environment imitate one that doesn’t actually exist, so that users can launch processes that wouldn’t be possible natively. This requires software code that simulates the desired underlying hardware environment to fool your software into thinking it’s actually running somewhere else.

Emulation can be relatively simple to implement, but it will nearly always come with a serious performance penalty.

There have traditionally been two classes of hypervisor: Type-1 and Type-2.
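
To make the trap-and-emulate idea a little more concrete, here is a toy Python model of it. This is only a sketch under some loud assumptions: the instruction names (`LOAD`, `ADD`, `WRITE_DISK`, `READ_DISK`), the `VirtualMachine` and `Hypervisor` classes, and the single `acc` register are all hypothetical and invented for illustration. No real hypervisor or CPU works at this level of simplicity. The point is just the control flow: ordinary instructions run directly, privileged ones trap, and the hypervisor redirects each trapped request to that guest's own virtualized resource.

```python
from dataclasses import dataclass, field

# Hypothetical instruction names for this toy model; real privileged
# x86 instructions and their semantics are far more involved.
PRIVILEGED = {"WRITE_DISK", "READ_DISK"}


class Trap(Exception):
    """Raised when deprivileged guest code attempts a privileged instruction."""

    def __init__(self, op, args):
        super().__init__(op)
        self.op = op
        self.args = args


@dataclass
class VirtualMachine:
    name: str
    disk: dict = field(default_factory=dict)  # this guest's virtual disk
    acc: int = 0                              # a single toy register


def run_unprivileged(vm, op, args):
    """Stand-in for the CPU: run safe instructions directly, trap the rest."""
    if op in PRIVILEGED:
        raise Trap(op, args)
    if op == "LOAD":
        vm.acc = args[0]
    elif op == "ADD":
        vm.acc += args[0]
    else:
        raise ValueError(f"unknown instruction: {op}")


class Hypervisor:
    def __init__(self):
        self.trap_count = 0  # how many instructions needed emulation

    def emulate(self, vm, trap):
        """Apply a trapped instruction to the guest's virtual device only."""
        self.trap_count += 1
        block = trap.args[0]
        if trap.op == "WRITE_DISK":
            vm.disk[block] = vm.acc
        elif trap.op == "READ_DISK":
            vm.acc = vm.disk.get(block, 0)

    def run(self, vm, program):
        for op, *args in program:
            try:
                run_unprivileged(vm, op, args)
            except Trap as trap:
                self.emulate(vm, trap)


if __name__ == "__main__":
    hv = Hypervisor()
    vm_a, vm_b = VirtualMachine("a"), VirtualMachine("b")
    hv.run(vm_a, [("LOAD", 5), ("ADD", 2), ("WRITE_DISK", 0)])
    hv.run(vm_b, [("LOAD", 1), ("WRITE_DISK", 0), ("READ_DISK", 0)])
    print(vm_a.disk, vm_b.disk, hv.trap_count)
```

Both guests write to "block 0", but each write lands on that guest's own virtual disk, so neither can see or clobber the other. The `trap_count` attribute hints at where the latency comes from: every privileged operation takes a detour through the hypervisor, which is the overhead that hardware extensions are meant to reduce.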

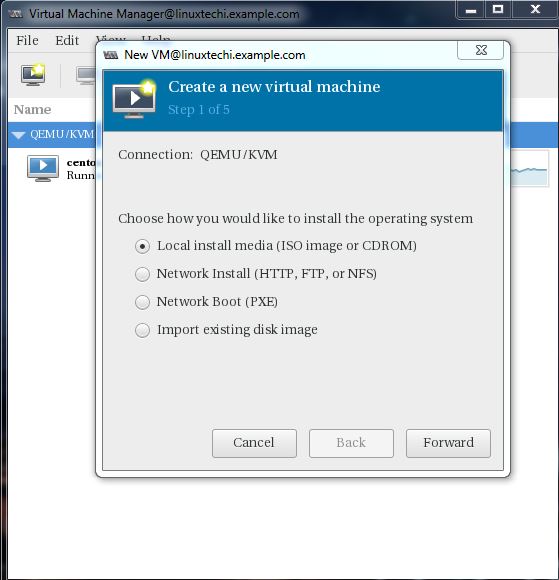

Excerpted from my book: Teach Yourself Linux Virtualization and High Availability: prepare for the LPIC-3 304 certification exam - also available from my Bootstrap-IT site.
